SMYS 1 | Review Intelligence as a GTM Signal Layer

How Vinayak Mishra built a five-tool pipeline to extract buying signals from restaurant reviews for Slang AI

👋 Hi, it’s Rick Koleta. Welcome to GTM Vault - a breakdown of how high-growth companies design, test, and scale revenue architecture. Join 25,000+ operators building GTM systems that compound.

Show Me Your Stack, Episode 1: Review-Led GTM for Slang AI

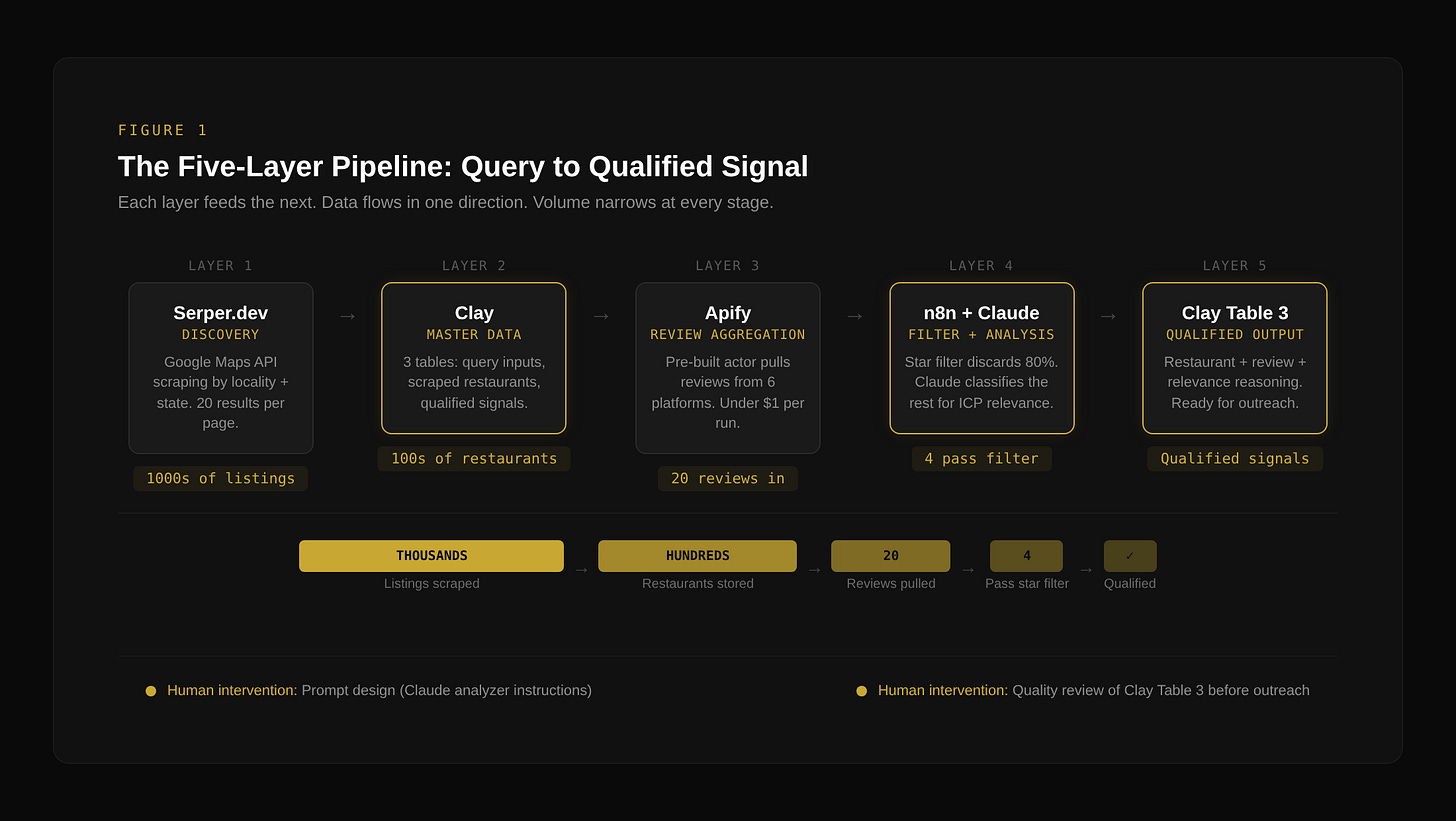

This episode: Vinayak Mishra walks through a restaurant review intelligence system built for Slang AI. Five tools. Five layers. Stage 2 orchestrated workflow. The breakdown below maps the build back to the Revenue Architecture.

The Targeting Problem Behind Review-Led GTM

Slang AI sells a voice AI reservation system for restaurants. The product solves a specific operational problem: restaurants lose bookings because no one answers the phone during peak hours. The company recently closed a Series B round.

The GTM bottleneck is targeting. Slang AI needs to find restaurants that are actively struggling with phone and reservation communication. Not restaurants in general. Restaurants where the problem is already visible in the customer record. The question is where that signal lives and how to extract it at scale.

The answer is reviews. Customers leave complaints on Google Maps, Yelp, and TripAdvisor when they cannot reach a restaurant by phone, when reservations are lost, when special requests made during booking are ignored. These are not abstract intent signals. They are documented failures described in the customer’s own language, timestamped and geolocated. A negative review that describes a phone or reservation failure is a qualified signal that the restaurant has the exact problem Slang AI solves.

This is not a lead list problem. It is a signal extraction problem. The system Vinayak built treats reviews as structured GTM data, not marketing noise.

Five Tools, Five Layers, One Direction

Five tools. Each one exists for a specific architectural reason.

Serper.dev serves as the discovery layer. It queries Google Maps results through Serper’s API to pull restaurant listings by locality and state across the US. The query structure is simple: “restaurants in [locality], [state].” Each query returns 20 results per page. The tool exists because it provides fast, cheap access to Google Maps data at scale without building a custom scraper.
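The query template is easy to generate in bulk. A minimal sketch of that step, assuming each input row carries a locality and a state; the `Locality` class, the helper names, the sample cities, and the `q`/`page` payload keys are illustrative assumptions, not the exact request shape used in the build:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Locality:
    """One input row: a locality name plus its state (hypothetical shape)."""
    name: str
    state: str


def build_query(loc: Locality) -> str:
    """Render the "restaurants in [locality], [state]" template."""
    return f"restaurants in {loc.name}, {loc.state}"


def build_payloads(localities: list[Locality], pages: int = 1) -> list[dict]:
    """Build one request body per (locality, page) pair.

    The "q" and "page" keys are an assumed payload shape for illustration.
    """
    return [
        {"q": build_query(loc), "page": page}
        for loc in localities
        for page in range(1, pages + 1)
    ]


# Sample localities chosen only for illustration.
payloads = build_payloads(
    [Locality("Austin", "TX"), Locality("Hoboken", "NJ")], pages=2
)
print(payloads[0])
```

Requesting more pages per locality deepens coverage at 20 results per page, at the cost of more queries.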

Clay operates as the master data layer. Three tables, each with a distinct function. Table one holds the raw locality and state inputs that generate Serper queries. Table two stores the scraped restaurant data (names, websites, place IDs, phone numbers, ratings). Table three receives qualified reviews pushed back from the orchestration layer. Clay is not a CRM in this architecture. It is the system of record.
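To make the three-table split concrete, here is a rough sketch of the records each table might hold. The table-two fields follow the list above; the class names, the table-three fields, and the use of `place_id` as the join key are assumptions for illustration, not Clay's actual schema:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LocalityInput:
    """Table one: raw inputs that generate Serper queries."""
    locality: str
    state: str


@dataclass
class Restaurant:
    """Table two: scraped listing data."""
    place_id: str
    name: str
    website: Optional[str] = None
    phone: Optional[str] = None
    rating: Optional[float] = None


@dataclass
class QualifiedReview:
    """Table three: reviews pushed back from the orchestration layer."""
    place_id: str  # assumed join key back to table two
    review_text: str
    stars: int


def reviews_by_restaurant(restaurants, reviews):
    """Group qualified reviews under each restaurant's place_id."""
    index = {r.place_id: [] for r in restaurants}
    for review in reviews:
        index.setdefault(review.place_id, []).append(review)
    return index
```

Keeping a stable identifier on every qualified review is what lets the third table attach signals to the right restaurant record.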

Apify provides the review aggregation layer. A pre-built actor called “restaurant review aggregator” takes search keywords and location as input and returns reviews across Google Maps, Yelp, TripAdvisor, Facebook, UberEats, and DoorDash. The tool exists because building custom scrapers for six review platforms is not a productive use of engineering time when a managed actor handles it for under a dollar per run.

n8n is the orchestration and filtering layer. It receives review data from Apify via webhook, applies a star rating filter (three stars or below), passes filtered reviews to the Claude analyzer, and routes qualified results back to Clay. n8n exists because this workflow requires conditional branching (star filter, relevance check, true/false routing) that Clay alone cannot handle cleanly.
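The branching logic is small enough to sketch outside n8n. The version below is an illustrative approximation, not the workflow itself: the review dictionaries, the `route_reviews` helper, and the keyword stub standing in for the Claude analyzer are all assumptions, and the sample reviews are invented.

```python
from typing import Callable, Iterable

MAX_STARS = 3  # "three stars or below" passes the first gate


def route_reviews(reviews: Iterable[dict], is_relevant: Callable[[str], bool]):
    """Split reviews into (qualified, discarded) using the two gates."""
    qualified, discarded = [], []
    for review in reviews:
        if review["stars"] > MAX_STARS:
            discarded.append(review)   # fails the star filter
        elif is_relevant(review["text"]):
            qualified.append(review)   # true branch: route back to Clay
        else:
            discarded.append(review)   # false branch: drop
    return qualified, discarded


def stub_analyzer(text: str) -> bool:
    """Placeholder for the model call; a real analyzer would be semantic."""
    return "phone" in text.lower()


# Invented sample reviews, for illustration only.
sample = [
    {"stars": 5, "text": "Great pasta, and they answered the phone right away."},
    {"stars": 2, "text": "Nobody answers the phone on weekends."},
    {"stars": 1, "text": "Food arrived cold."},
]
qualified, discarded = route_reviews(sample, stub_analyzer)
print(len(qualified), len(discarded))
```

Running the cheap star filter first means the analyzer only sees reviews that could plausibly qualify, which keeps model calls down.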

Claude performs the relevance analysis. A structured prompt instructs the model to act as a review analyst for Slang AI, evaluating each negative review against specific signals: restaurant unreachable by phone during busy periods, multiple unanswered calls, lost reservations, ignored booking requests. The prompt includes relevant signals, non-relevant signals, and edge cases. Claude exists in this stack because keyword matching would miss the semantic complexity of review language. “Called three times, no one picked up” and “tried to book for my daughter’s birthday but they completely forgot” both indicate the same structural problem, but they share no keywords, so a keyword or regex filter tuned for one would miss the other.
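A short sketch shows both halves of that argument: how a prompt with this structure might be assembled, and how a phone-keyword pattern handles the two example reviews. This is not the actual prompt from the build. The wording, the `build_prompt` helper, and the non-relevant placeholder are hypothetical, and only the four relevant signals come from the description above.

```python
import re

RELEVANT_SIGNALS = [
    "restaurant unreachable by phone during busy periods",
    "multiple unanswered calls",
    "lost reservations",
    "ignored booking requests",
]
# Hypothetical placeholder: the actual non-relevant signals are not listed.
NON_RELEVANT_SIGNALS = ["complaints about food quality (hypothetical example)"]


def build_prompt(review_text: str) -> str:
    """Assemble an analyst-style prompt (illustrative wording only)."""
    relevant = "\n".join(f"- {s}" for s in RELEVANT_SIGNALS)
    non_relevant = "\n".join(f"- {s}" for s in NON_RELEVANT_SIGNALS)
    return (
        "You are a review analyst for Slang AI.\n"
        "Decide whether the negative review below shows a phone or "
        "reservation failure.\n\n"
        f"Relevant signals:\n{relevant}\n\n"
        f"Non-relevant signals:\n{non_relevant}\n\n"
        f"Review:\n{review_text}\n\n"
        "Answer with exactly one word: true or false."
    )


# A phone-centred keyword pattern, the kind of filter a model replaces.
PHONE_KEYWORDS = re.compile(
    r"\b(phone|call(?:ed|s|ing)?|voicemail|picked up)\b", re.IGNORECASE
)

phone_review = "Called three times, no one picked up"
booking_review = "tried to book for my daughter's birthday but they completely forgot"

print(bool(PHONE_KEYWORDS.search(phone_review)))    # True
print(bool(PHONE_KEYWORDS.search(booking_review)))  # False
```

Widening the pattern to include words like “book” would catch the second review, but it would also match every review that mentions booking at all, which is the precision problem the model is there to solve.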