The Three Stages of AI-Native GTM

The gap between generating subject lines and running pipeline on autopilot is not a better model. It is a different architecture.

👋 Hi, it’s Rick Koleta. Welcome to GTM Vault - a weekly breakdown of how high-growth companies design, test, and scale revenue architecture. Join 25,000+ operators building GTM systems that compound.

Your SDR uses Claude to rewrite cold email subject lines. Your marketing lead pastes blog drafts into ChatGPT for editing. Your RevOps analyst asks an LLM to write a SQL query. Every team adopted the tool. Almost none of them changed the process around it.

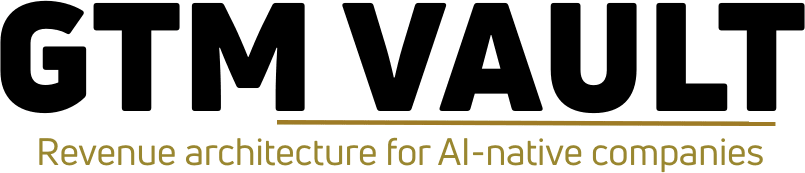

There are three distinct stages of AI integration in go-to-market execution. The gap between them is not incremental. It is architectural. And most teams are stuck at the first stage, measuring AI adoption by how many seats they purchased instead of how many manual steps they eliminated.

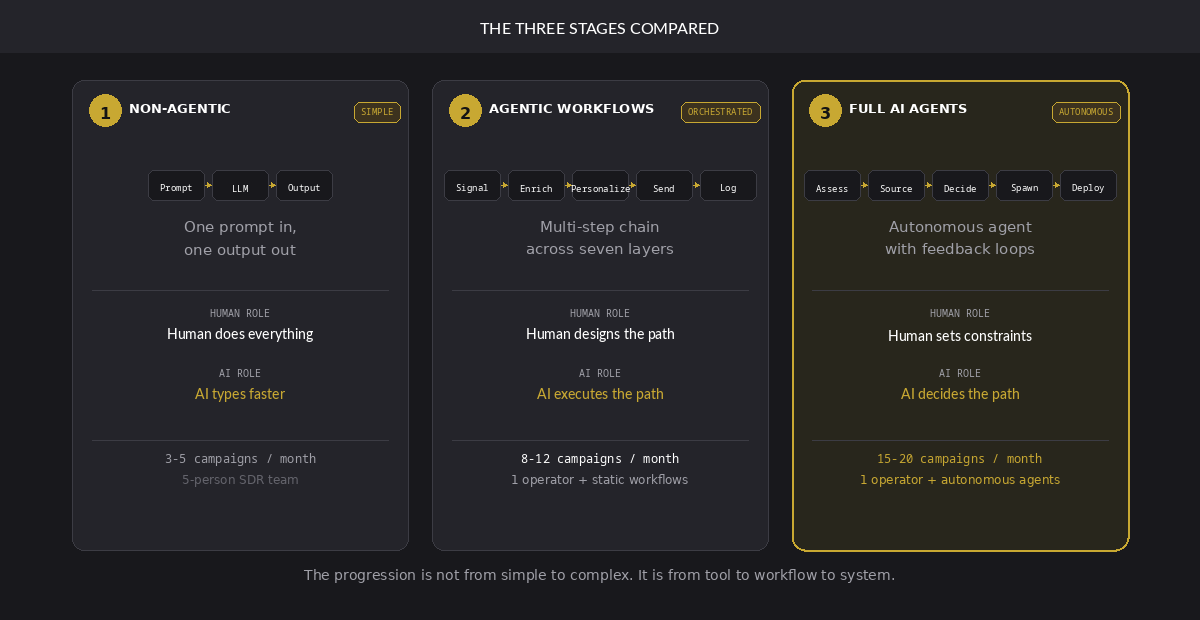

Stage 1 is non-agentic. One prompt in, one output out. The human does the thinking, the AI does the typing. Stage 2 is orchestrated workflows. Multi-step chains across signal detection, data enrichment, outreach execution, and CRM logging, with the operator designing the path and the system executing it. Stage 3 is fully autonomous agents. The AI reads pipeline state, identifies gaps, spawns subagents, runs campaigns overnight, and reports results in the morning. The operator sets the constraints. The agents run the operation.

The distance between these stages is not a product upgrade. It is a rebuild.

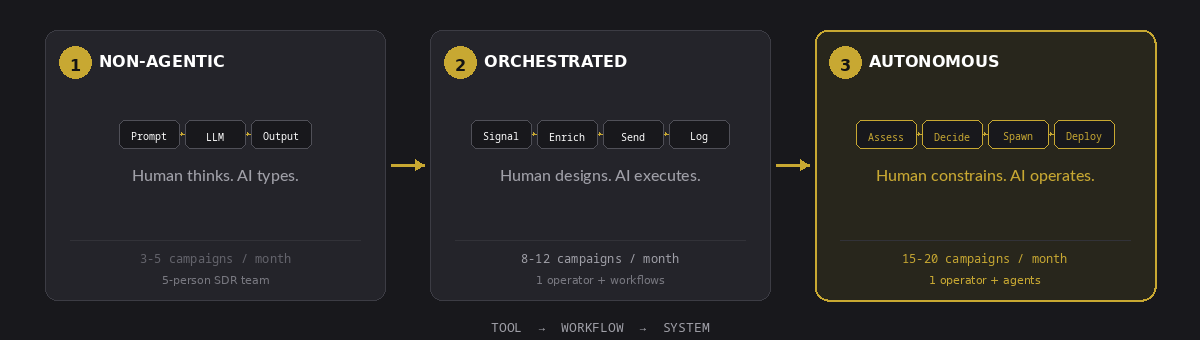

Level 1: Single Prompt, Single Output

The default mode. One person types a prompt. The model returns a response. No tools connected. No files referenced. No decisions made.

“Write 10 cold email subject lines targeting Series B SaaS founders.”

The model generates ten lines. The SDR picks three. Maybe A/B tests two. The process ends where it started, with a human doing all the thinking and the AI doing the typing.

This is where 90 percent of GTM teams operate today. The problem is not the output quality. The subject lines are fine. The problem is that every upstream and downstream step remains manual: identifying the ICP, sourcing the leads, enriching the data, personalizing the message, sequencing the send, measuring the reply rate, and feeding the signal back into targeting. The AI touches one node in a twelve-node process. The other eleven nodes are still duct tape and spreadsheets.

Level 2: Orchestrated Workflows

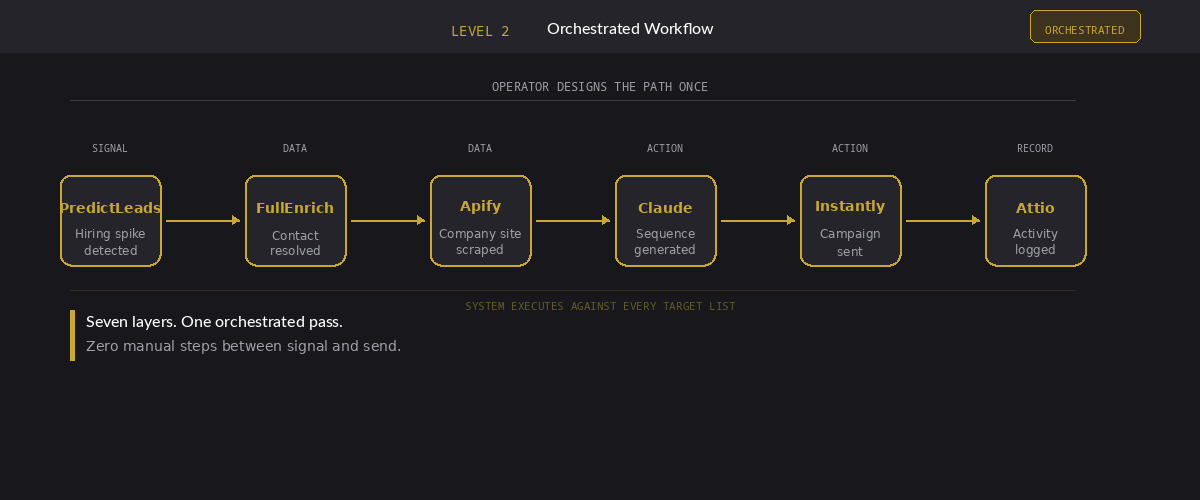

At Level 2, the AI is not answering questions. It is executing sequences.

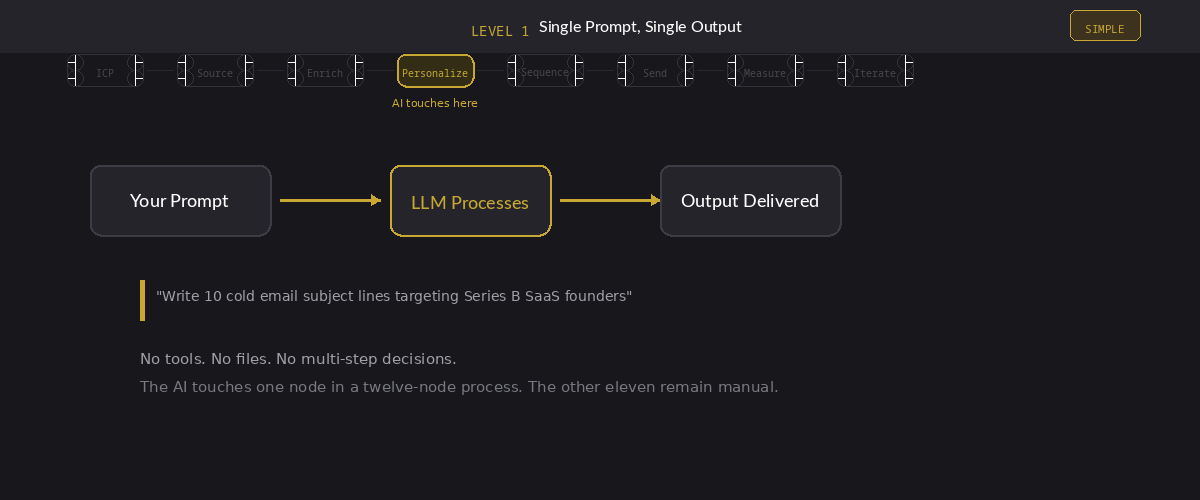

The infrastructure that makes this possible is not one tool. It is a layered stack where each layer feeds the next. Signal feeds data. Data feeds action. Action feeds the system of record. The system of record feeds back into signal. A modern AI-native GTM stack runs through seven distinct layers, each with its own API surface.

The signal layer detects buying intent before anyone fills out a form. Hiring spikes, funding rounds, technology adoption changes, repeat website visits, competitor mentions on sales calls. The architectural job of this layer is to surface accounts that are changing, not accounts that match a static firmographic filter. Tools like PredictLeads, Common Room, Attention, and RB2B each capture different signal types. The operator’s job is deciding which signals correlate with actual pipeline, not just activity.

The data layer resolves those signals into reachable people. This is where most stacks leak. A signal fires on a company, but nobody can find the right buyer’s verified email within the execution window. The signal ages out. The intent was real. The data infrastructure was not. The architectural decision here is not which enrichment provider to pick. It is whether to cascade across multiple providers (Prospeo, FullEnrich, Apollo, Wiza, Apify) until a verified channel is found, or accept the coverage gap and lose 20 to 40 percent of actionable signals before outreach even begins.
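The cascade decision can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the `Contact` type, the `Provider` interface, and the two stub providers are hypothetical stand-ins for whichever enrichment services the stack actually calls.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Contact:
    name: str
    company: str
    email: Optional[str] = None
    source: Optional[str] = None  # which provider resolved the email


# Hypothetical provider interface: returns a verified email, or None on a miss.
Provider = Callable[[Contact], Optional[str]]


def cascade_enrich(contact: Contact, providers: list[tuple[str, Provider]]) -> Contact:
    """Try each provider in order and stop at the first verified email."""
    for name, lookup in providers:
        try:
            email = lookup(contact)
        except Exception:
            # One failing provider should not end the cascade.
            continue
        if email:
            contact.email = email
            contact.source = name
            break
    return contact


# Stub providers (assumptions for illustration only).
def provider_a(contact: Contact) -> Optional[str]:
    return None  # simulated coverage miss


def provider_b(contact: Contact) -> Optional[str]:
    first = contact.name.split()[0].lower()
    return f"{first}@example.com"


enriched = cascade_enrich(
    Contact(name="Dana Lee", company="Acme"),
    [("provider_a", provider_a), ("provider_b", provider_b)],
)
print(enriched.email, enriched.source)
```

Provider order is the design choice here. Putting the cheapest or highest-coverage source first keeps cost down, and a contact that exits the cascade with no email is the coverage gap made visible.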

The action layer converts enriched contacts into live sequences. The structural question is channel routing: which contacts get which treatment. A VP of Engineering at a Series B company with three website visits in the last week does not belong in the same cold email blast as a director-level contact scraped from a conference attendee list. The action layer needs to receive routing logic from the layers above it, not apply a single sequence to every contact that enters the pipe.
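A rough sketch of what routing logic might look like when it arrives from the layers above. The `Lead` fields, the seniority keywords, the three-visit threshold, and the sequence names are all assumptions chosen to mirror the example in the paragraph, not a recommended rule set.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    title: str
    funding_stage: str    # e.g. "Series B"
    visits_last_7d: int   # website visits in the last week
    source: str           # e.g. "website", "conference_list"


# Hypothetical seniority keywords; a real stack would likely use a richer mapping.
SENIOR_KEYWORDS = ("vp", "chief", "head of", "founder")


def route(lead: Lead) -> str:
    """Return the name of the sequence a lead should enter."""
    is_senior = any(keyword in lead.title.lower() for keyword in SENIOR_KEYWORDS)
    if is_senior and lead.visits_last_7d >= 3:
        return "multichannel_high_touch"
    if lead.source == "conference_list":
        return "low_volume_nurture"
    return "standard_cold_email"


hot = Lead("VP of Engineering", "Series B", visits_last_7d=3, source="website")
cold = Lead("Director of IT", "Series A", visits_last_7d=0, source="conference_list")
print(route(hot), route(cold))
```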

The remaining layers complete the loop. An automation layer (n8n or equivalent) connects every API and manages sequencing. A system of record (Attio or whatever CRM the team runs) holds canonical state with real-time webhooks. A conversion layer turns replies into booked meetings with territory-based routing. A revenue layer closes the feedback loop from first signal to closed deal through attribution systems like Dreamdata.

A single Level 2 workflow chains across the stack without a human touching the handoff. The workflow detects a hiring spike in PredictLeads, enriches the VP of Sales through FullEnrich, scrapes their company’s website through Apify for personalization context, generates a custom sequence, pushes it into Instantly, and logs the activity in Attio. One orchestrated pass. Zero manual steps between signal and send.
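Structurally, that pass is a fixed list of steps sharing one context. The sketch below assumes each step is a plain function that reads and extends a dictionary. Every step body is a stub, and none of it calls a real vendor API.

```python
from typing import Callable

Step = Callable[[dict], dict]


# Each step is a stub standing in for a real API call (all hypothetical).
def detect_signal(ctx: dict) -> dict:
    ctx["signal"] = {"company": "Acme", "type": "hiring_spike"}
    return ctx


def enrich_contact(ctx: dict) -> dict:
    ctx["contact"] = {"title": "VP of Sales", "email": "vp@example.com"}
    return ctx


def gather_context(ctx: dict) -> dict:
    ctx["personalization"] = f"Noticed {ctx['signal']['company']} is hiring"
    return ctx


def build_sequence(ctx: dict) -> dict:
    ctx["sequence"] = [f"{ctx['personalization']}. Worth a quick chat?"]
    return ctx


def push_to_sender(ctx: dict) -> dict:
    ctx["queued"] = True
    return ctx


def log_to_crm(ctx: dict) -> dict:
    ctx["crm_logged"] = True
    return ctx


def run_workflow(steps: list[Step]) -> dict:
    """Run steps in the order the operator defined, recording each handoff."""
    ctx: dict = {"trace": []}
    for step in steps:
        ctx = step(ctx)
        ctx["trace"].append(step.__name__)
    return ctx


result = run_workflow(
    [detect_signal, enrich_contact, gather_context, build_sequence, push_to_sender, log_to_crm]
)
print(result["trace"])
```

The order lives in the list the operator wrote. Nothing in `run_workflow` can reorder, skip, or replace a step.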

This is where the economics change. A Level 1 team needs an SDR to research each account, write each email, and log each touchpoint. A Level 2 team needs an operator to build the workflow once and then run it against every new target list. The marginal cost of the next campaign drops from hours to minutes.

But Level 2 has a ceiling, and it is lower than most teams expect.

The workflows are static. They run the path the operator designed. They do not adapt. If reply rates drop from 4 percent to 1.5 percent on a sequence targeting mid-market fintech, the workflow keeps sending. It does not know the signal quality degraded. It does not know that the ICP shifted after a competitor launched a similar product last month. A human has to notice the metric, diagnose the cause, redesign the workflow, and redeploy. That cycle takes days. In those days, the workflow burns through hundreds of contacts with messaging that no longer converts.

The other failure mode is brittleness. A Level 2 workflow is a chain. If one API goes down, if FullEnrich returns a timeout, if Instantly hits a deliverability throttle, the entire sequence stalls. There is no fallback logic unless the operator built one. Most do not. The system executes the path it was given. It does not route around failures. It does not think.
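The fallback logic most operators skip is not large. A minimal sketch, assuming each provider call can be wrapped as a zero-argument function. `call_with_fallback`, the retry count, and the backoff delay are illustrative choices, and the two enrichment functions are stubs.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def call_with_fallback(
    candidates: Sequence[Callable[[], T]],
    retries: int = 2,
    base_delay: float = 0.5,
) -> T:
    """Try each candidate in order, retrying with exponential backoff on failure."""
    last_error: Optional[Exception] = None
    for fn in candidates:
        for attempt in range(retries + 1):
            try:
                return fn()
            except Exception as exc:
                last_error = exc
                # Wait longer after each failed attempt.
                time.sleep(base_delay * (2 ** attempt))
    raise RuntimeError("all candidates failed") from last_error


calls = {"primary": 0}


def primary_enrich() -> str:
    calls["primary"] += 1
    raise TimeoutError("simulated provider timeout")


def backup_enrich() -> str:
    return "vp@example.com"


# base_delay=0 keeps the demo instant; a real workflow would wait between attempts.
email = call_with_fallback([primary_enrich, backup_enrich], retries=2, base_delay=0)
print(email, calls["primary"])
```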

Level 3: Autonomous GTM Agents

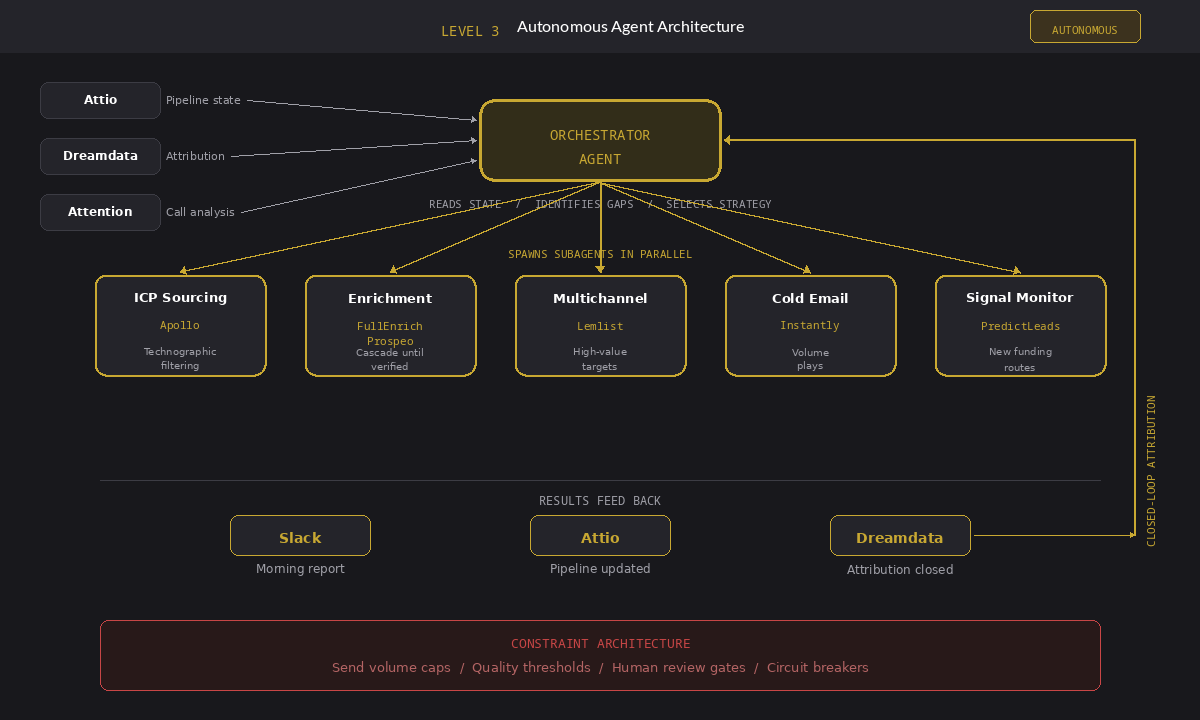

Level 3 is where the AI stops executing predefined workflows and starts making decisions about which workflows to run.

The agent queries Attio for the current pipeline state. It identifies gaps: not enough enterprise opportunities, too many stalled mid-funnel deals, low reply rates in the fintech vertical. It checks Dreamdata for attribution data on which channels are converting. It pulls Attention transcripts to find the objections that are killing deals. Then it decides what to do about it.
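Gap identification is a comparison of pipeline state against operator-defined targets. The sketch below assumes the state has already been pulled from the CRM into a simple structure. The field names, thresholds, and gap labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PipelineState:
    enterprise_opps: int
    stalled_mid_funnel: int
    reply_rate_by_vertical: dict[str, float]


@dataclass
class Targets:
    min_enterprise_opps: int
    max_stalled_mid_funnel: int
    min_reply_rate: float


def find_gaps(state: PipelineState, targets: Targets) -> list[str]:
    """Return a label for every target the current pipeline misses."""
    gaps: list[str] = []
    if state.enterprise_opps < targets.min_enterprise_opps:
        gaps.append("enterprise_pipeline_low")
    if state.stalled_mid_funnel > targets.max_stalled_mid_funnel:
        gaps.append("mid_funnel_stalled")
    # Sort verticals so the output order is stable.
    for vertical, rate in sorted(state.reply_rate_by_vertical.items()):
        if rate < targets.min_reply_rate:
            gaps.append(f"low_reply_rate:{vertical}")
    return gaps


state = PipelineState(
    enterprise_opps=2,
    stalled_mid_funnel=14,
    reply_rate_by_vertical={"fintech": 0.015, "saas": 0.04},
)
targets = Targets(min_enterprise_opps=5, max_stalled_mid_funnel=10, min_reply_rate=0.03)
gaps = find_gaps(state, targets)
print(gaps)
```

The returned labels are what a Level 3 agent would act on. Deciding which workflow answers each gap is the part Level 2 leaves to a human.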

It spawns subagents. One pulls a fresh ICP list from Apollo filtered by the technographic signals that correlate with closed-won deals. Another enriches through FullEnrich and Prospeo in parallel, cascading across providers until every contact has a verified channel. A third builds multichannel sequences in Lemlist for high-value targets and cold email campaigns in Instantly for volume plays. A fourth monitors PredictLeads for new funding signals that match the target profile and routes them into the enrichment queue automatically.
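Spawning subagents maps naturally onto concurrent tasks. The sketch below uses Python's `asyncio.gather` with stub coroutines. It treats the four subagents as independent for simplicity, which is an assumption: in practice enrichment would wait on the list pull. One stub fails on purpose to show that a single subagent error does not cancel the others.

```python
import asyncio


# Stub subagents; each would wrap real tool calls in practice (all hypothetical).
async def pull_icp_list() -> str:
    await asyncio.sleep(0)
    return "icp_list_ready"


async def enrich_contacts() -> str:
    await asyncio.sleep(0)
    return "contacts_enriched"


async def build_sequences() -> str:
    await asyncio.sleep(0)
    return "sequences_built"


async def monitor_signals() -> str:
    await asyncio.sleep(0)
    raise RuntimeError("simulated signal feed outage")


async def run_subagents() -> dict:
    subagents = {
        "icp": pull_icp_list,
        "enrichment": enrich_contacts,
        "sequences": build_sequences,
        "signals": monitor_signals,
    }
    # return_exceptions=True collects failures instead of aborting the batch.
    results = await asyncio.gather(
        *(fn() for fn in subagents.values()), return_exceptions=True
    )
    return dict(zip(subagents, results))


report = asyncio.run(run_subagents())
failed = [name for name, outcome in report.items() if isinstance(outcome, Exception)]
print(report, failed)
```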

The operator does not design the path. The operator defines the objectives, the constraints, and the feedback loops. The agent figures out the path.

This is not a theoretical architecture. Teams running at Level 3 today deploy through cron-scheduled jobs, headless SDK implementations, and GitHub Actions pipelines. The orchestration layer connects every API in the stack into a single feedback system. The agent reads the state of the pipeline, decides what to do about it, executes across multiple tools simultaneously, and reports what happened.

Here is what that looks like operationally. A five-person SDR team at Level 1 runs three to five outbound campaigns per month. Each campaign takes a week to research, write, personalize, and send. The team produces maybe 500 to 800 personalized touches per month.

A single operator at Level 3 runs 15 to 20 micro-campaigns in the same period. Each micro-campaign targets a specific segment (Series B fintech companies that just hired a VP of Sales, mid-market SaaS companies showing intent on G2 for a competing product, enterprise accounts where a champion just changed jobs). Each one gets its own messaging, its own channel routing, its own send cadence. The agent builds, launches, and monitors all of them.

The operator reviews results in Slack each morning, adjusts constraints when a campaign underperforms, and redeploys. Total active work: two to three hours a day. Total output: three to four times the volume of the five-person team, with tighter targeting on every campaign.

Not because the operator works nights. Because the agents do.

But Level 3 is not free of failure modes. It introduces a new class of them.

When subagents run overnight, the blast radius of a bad decision scales with the autonomy. A miscalibrated targeting filter does not waste one afternoon of manual research. It burns through a thousand contacts before anyone wakes up. An agent that optimizes for reply rate without weighting for deal quality will fill the pipeline with leads that never close, and the operator will not see the damage until the sales cycle plays out sixty to ninety days later.

The constraint architecture matters more at Level 3 than at any other stage. That means guardrails on send volume per campaign, contact quality thresholds before a lead enters a sequence, mandatory human review gates for enterprise accounts, and automatic circuit breakers when deliverability drops below defined floors. Without these, autonomy is not leverage. It is exposure. The difference between a Level 3 system that compounds and one that self-destructs is the quality of the constraints the operator defined before the agent started making decisions.
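Those four constraints can be expressed as a single check that runs before every send. This is a sketch under stated assumptions: the `Constraints` fields, the default thresholds, and the decision labels are hypothetical, and a real system would read deliverability from its sending platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraints:
    max_sends_per_campaign: int = 200
    min_contact_score: float = 0.7
    min_deliverability: float = 0.95           # circuit-breaker floor
    review_segments: frozenset = frozenset({"enterprise"})


def check_send(
    constraints: Constraints,
    *,
    sends_so_far: int,
    contact_score: float,
    segment: str,
    deliverability: float,
) -> str:
    """Decide what happens to one contact before it enters a sequence."""
    # Campaign-wide breakers come first: they stop everything, not one contact.
    if deliverability < constraints.min_deliverability:
        return "halt_campaign"
    if sends_so_far >= constraints.max_sends_per_campaign:
        return "halt_campaign"
    if contact_score < constraints.min_contact_score:
        return "skip_contact"
    if segment in constraints.review_segments:
        return "needs_human_review"
    return "send"


limits = Constraints()
decision = check_send(
    limits, sends_so_far=40, contact_score=0.9, segment="enterprise", deliverability=0.98
)
print(decision)
```

Ordering matters in this sketch. The campaign-level breakers are evaluated before any per-contact rule, so a deliverability drop halts the run even when the next contact looks perfect.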

The Real Gap Is Not Technical

The distance between Level 1 and Level 3 looks like a technology problem. It is not. It is an architecture problem.

The APIs exist. Nearly twenty of them cover the full path from signal detection to revenue attribution. The infrastructure is not the bottleneck.

Level 1 teams bolt AI onto existing manual processes. The process stays the same. The AI just types faster.

Level 2 teams redesign the process around tool orchestration. The workflow improves. But the decision-making stays human.

Level 3 teams architect a system where the AI operates within defined objectives and constraints, making execution decisions, allocating resources across channels, and adapting based on measured outcomes. The human sets the strategy. The system runs the operation.

Most teams never get past Level 1 because they treat AI as a feature, not as an infrastructure layer. They add it to a workflow instead of rebuilding the workflow around it. That is the same mistake GTM teams made with CRMs in 2010, with marketing automation in 2015, and with product-led growth tooling in 2019. The tool works. The architecture around it does not.

The question is not which AI tools to buy. It is at which stage your current architecture actually operates, and what you would need to change structurally to move to the next one. The answer is almost never a new vendor. It is a redesign of how the existing tools connect, what decisions are automated, and where the human review gates sit. That redesign is the work. Everything else is buying seats.