The Context Layer Is Becoming the Operating System of AI-Native GTM

Why MCPs are closing the fragmentation tax underneath the modern stack, and what changes when context carries across every agent, tool, and motion

👋 Hi, it’s Rick Koleta. Welcome to GTM Vault - a breakdown of how high-growth companies design, test, and scale revenue architecture. Join 26,000+ operators building GTM systems that compound.

Executive Summary

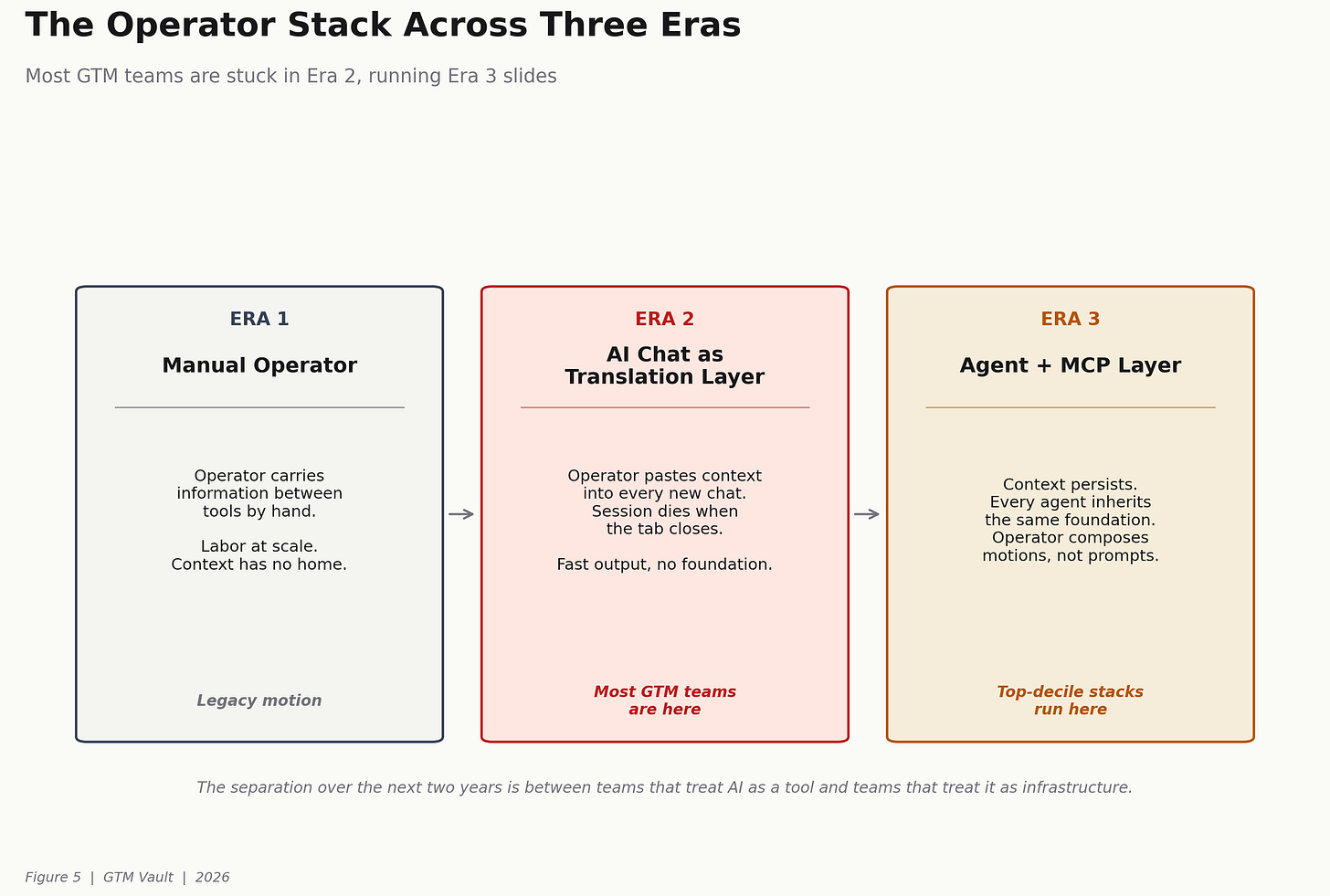

The GTM stack has been fragmenting for a decade. Most B2B companies now run six revenue motions across thirty-plus tools, managed by nobody in particular, reconciled by dashboards nobody trusts, and asked to produce leverage from an AI layer that starts every session at zero.

The bottleneck is not the models. It is not the tools. It is the context layer between them. Without one, every prompt is a cold start. Every agent is doing generic work on a generic description of the company. Every session dies when the tab closes.

Model Context Protocol (MCP) is the first piece of infrastructure that closes that gap. It is quiet. It is unsexy. It will not make a keynote. It is also the architectural inflection that separates the GTM stacks that will compound over the next cycle from the ones that will not.

This post establishes four structural truths.

The fragmentation of the modern GTM stack is a context problem, not a tooling problem.

MCPs convert the stack from a collection of tools into an operating system for the motion.

Five real stacks already operate on this pattern. The outcomes are measurable and the architecture is public.

Teams that install the context layer this year will compound at a rate teams on the fragmented stack cannot match.

What follows is the operator-level breakdown of each.

The Fragmentation Tax

Sangram Vajre captured the underlying condition in a line worth stealing: ask most B2B CEOs what their revenue motion is and they will say “we do a little bit of everything.” That is not a go-to-market strategy. That is channel accumulation dressed up as strategy. Industry research puts the average B2B company at six revenue motions in flight, most of them unmanaged.

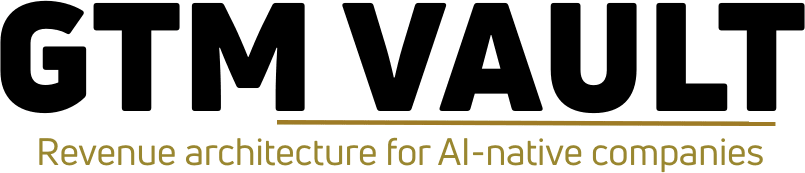

Each motion came with its own tooling. Outbound bought Apollo, Clay, Lemlist. ABM bought 6sense and Demandbase. Revenue ops bought Salesforce, Gong, and a data warehouse to reconcile the other two. Customer success bought Gainsight. Product bought Amplitude. Marketing bought HubSpot on top of Marketo on top of Mailchimp. Each tool solved the motion it was sold against and created a new fragmentation tax at the boundary between motions.

A side-by-side of the old playbook makes the texture concrete. Buy a static list from ZoomInfo or Salesforce. Load fifty thousand contacts into Outreach, Salesloft, or Marketo. Pay ten BDRs to grind eight-step cadences. Hope for a three percent reply rate. That is not a system. It is ten people manually carrying the context the stack should be carrying for them.

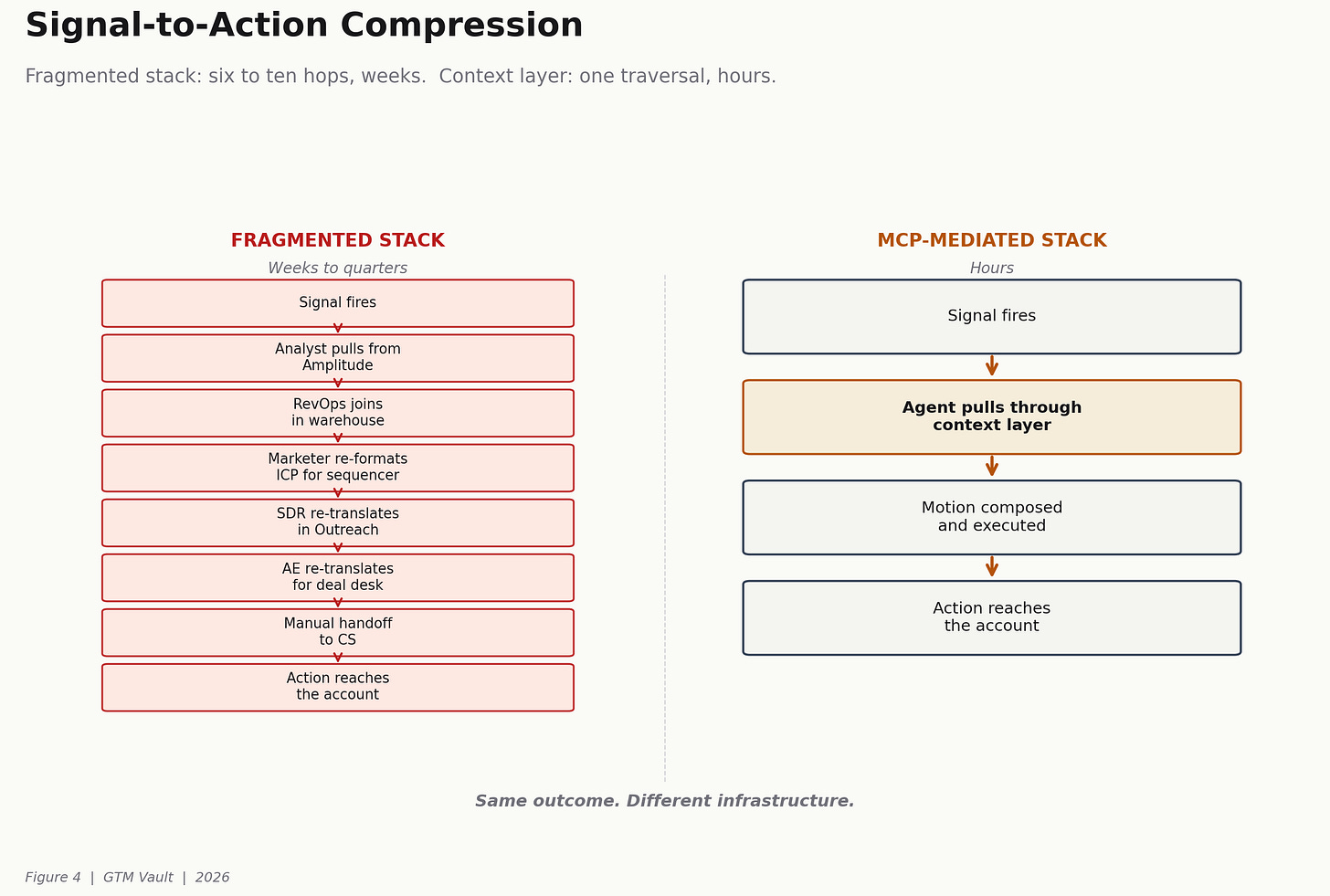

The tax compounds in three directions.

The first is a data tax. Every tool has its own schema. Reconciliation happens manually or through brittle scripts. Half the RevOps function exists to repair handoffs that should not break in the first place.

The second is a human tax. Every operator becomes a translation layer. The marketer formats the ideal customer profile (ICP) for the sequencer, the SDR re-translates it for the caller, and the AE re-translates it again for the deal desk. The same information passes through three re-interpretations before it reaches the account.

The third is a signal tax. Most of the observable intent in the stack is stranded inside a tool that cannot talk to any other. Product usage sits in Amplitude. Intent sits in 6sense. Renewal signal sits in Gainsight. Each is a rich source. None feeds the others.

The architectural point sits underneath all three. The bottleneck is not the models or access to tools. It is how well everything is connected, and how willing the company is to rewire itself when the market moves. AI layered on top of a fragmented stack does not fix the fragmentation. It amplifies it. An agent running on six to eight disconnected tools produces noise at scale, because every session starts at zero and the context it needs lives in places it cannot reach.

This is not a tooling problem. It is an architecture problem.

The Reframe: Context as Infrastructure

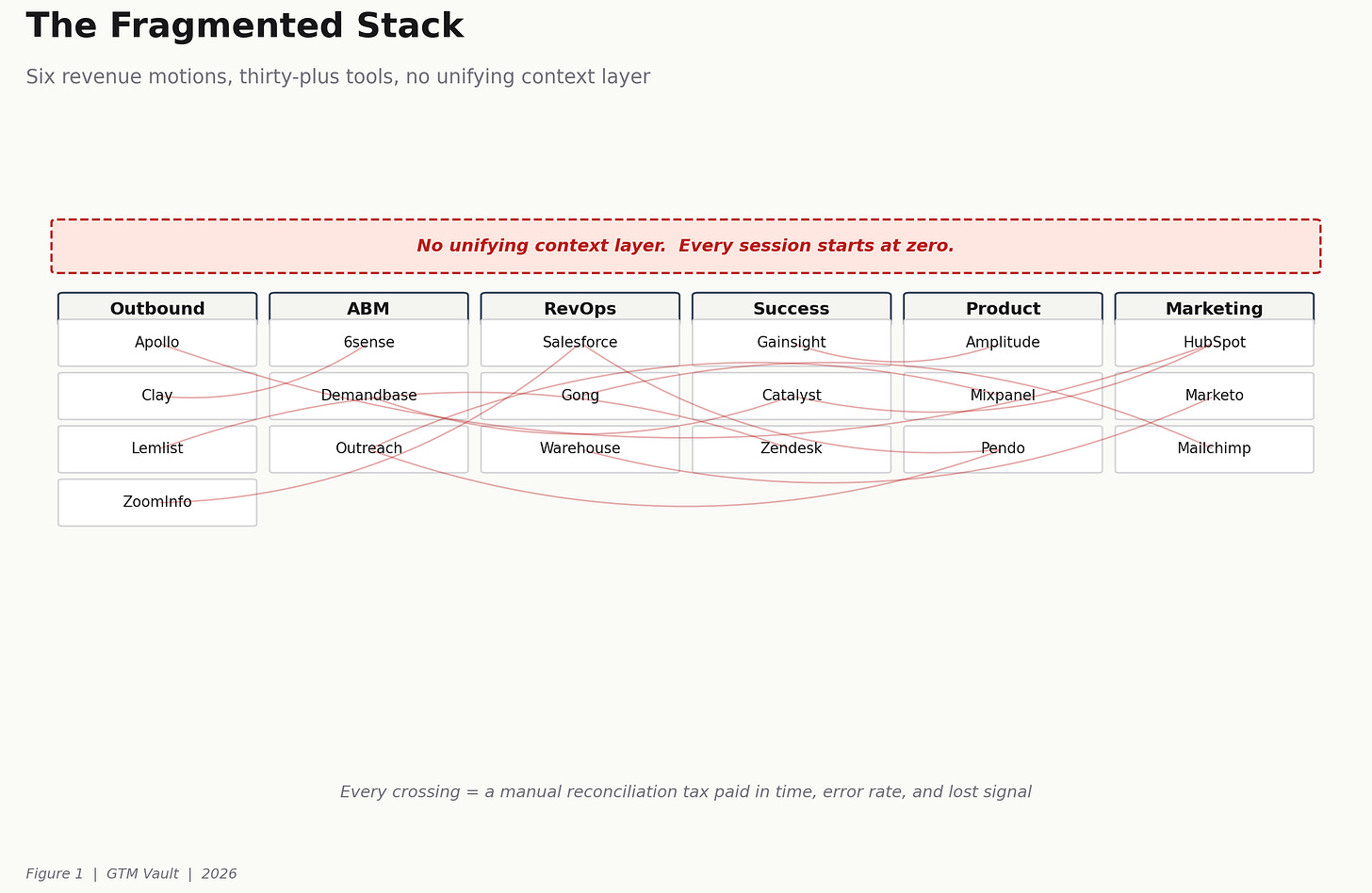

MCP is a protocol, not a product. It lets an agent talk to the tools in the stack through standardized servers, each one exposing a tool's data and actions in the same shape. Pre-built servers exist for most tools a GTM team already owns. Custom servers can be built for the tools that lack one.
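To make "standardized" concrete: MCP messages are JSON-RPC 2.0, and an agent invokes a tool with a `tools/call` request that names the tool and passes its arguments. The sketch below only builds such requests and does not open a connection. The tool names (`crm_get_account`, `warehouse_product_usage`) and their arguments are hypothetical, since each real server advertises its own tools.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool names and arguments, for illustration only.
crm_request = tool_call_request("crm_get_account", {"domain": "example.com"})
usage_request = tool_call_request(
    "warehouse_product_usage", {"domain": "example.com", "quarters": 2}
)
print(crm_request)
print(usage_request)
```

The point of the shape is that the CRM call and the warehouse call differ only in the tool name and arguments. The agent does not need a separate integration per tool.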

What it replaces is mechanical. The copy-paste of context into every new chat. The tab-switching between CRM, data warehouse, calendar, and sequencer. The re-prompting of the same ICP every session. The generic output that comes from the model having no access to the company’s actual data.

Agents got the hype. MCPs got the adoption. The agent is the surface the operator interacts with. The MCP layer is the infrastructure that lets the agent act on the real stack instead of acting on a description of the stack.

Once the context layer is in place, the unit of work changes. An operator is no longer building prompts. They are composing motions. The outbound agent calls the CRM through an MCP, pulls the account, reads the last two quarters of product usage from the warehouse, checks the calendar for the next free slot, and drafts the follow-up. The surfaces do not matter. The context carries.
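The outbound example above can be sketched as a composed motion. This is a minimal illustration under stated assumptions, not a real MCP client: `ContextLayer` is a hypothetical in-memory registry standing in for the MCP connections, the three tools are stubs that return placeholder data, and the tool names are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class ContextLayer:
    """Hypothetical stand-in for a set of MCP connections: one registry, many tools."""
    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **arguments: Any) -> Any:
        if name not in self.tools:
            raise KeyError(f"no tool registered under {name!r}")
        return self.tools[name](**arguments)


# Stub tools returning placeholder data; real ones would query live systems.
def get_account(domain: str) -> dict:
    return {"domain": domain, "name": "Example Co", "owner": "ae@example.com"}


def product_usage(domain: str, quarters: int) -> dict:
    return {"quarters": quarters, "weekly_active_seats": [40, 55]}


def next_free_slot(owner: str) -> str:
    return "2030-01-15T15:00"


def outbound_follow_up(ctx: ContextLayer, domain: str) -> str:
    """Compose one motion from several tool calls that share the same context."""
    account = ctx.call("crm.get_account", domain=domain)
    usage = ctx.call("warehouse.product_usage", domain=domain, quarters=2)
    slot = ctx.call("calendar.next_free_slot", owner=account["owner"])
    seats = usage["weekly_active_seats"]
    trend = "up" if seats[-1] > seats[0] else "flat or down"
    return (
        f"Hi {account['name']} team, seat usage is {trend} over the last "
        f"{usage['quarters']} quarters. Are you free at {slot}?"
    )


ctx = ContextLayer()
ctx.register("crm.get_account", get_account)
ctx.register("warehouse.product_usage", product_usage)
ctx.register("calendar.next_free_slot", next_free_slot)
draft = outbound_follow_up(ctx, "example.com")
print(draft)
```

The operator writes `outbound_follow_up` once. Swapping the CRM or the calendar means re-registering one tool, not rewriting the motion.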

The structural consequence is the one that matters. When context persists across sessions and across agents, the operator stops owning the translation layer. The stack starts carrying the motion instead of the operator carrying the stack.

The Pattern in Production

Five stacks are currently operating in the new architecture. Each one is public enough to verify and documented by the operators who built it. The common property is that context moves across every surface in the stack. Every one of them would collapse without it.

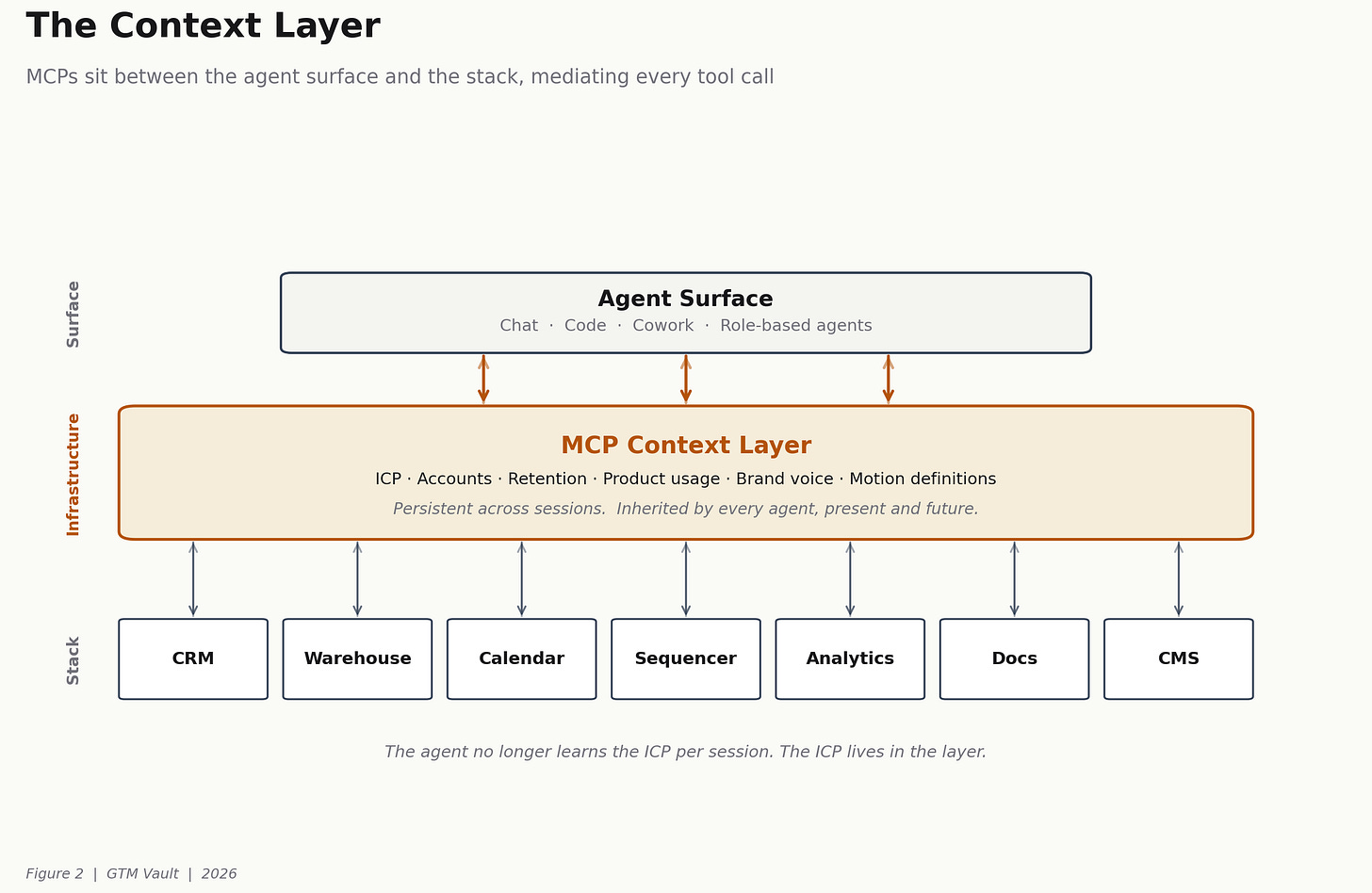

The sixteen-MCP reference stack. A recurring pattern across dozens of high-functioning B2B GTM systems: roughly sixteen MCPs organized by category (productivity, data, outbound, content, analytics). The architectural property is not the tool list. It is that the same context carries across every surface, so the agent is not re-learning the ICP on each call.

The role-based agent stack. Twenty-three opinionated tools structured as role-based agents. CEO. Designer. Engineering Manager. Release Manager. Doc Engineer. QA. The stack is the org chart, translated into an agent layer. Public GitHub repo. Clonable. The pattern worth noticing is that the agents are defined by their function, not by the tool underneath them. That is the operator shift.

The open-sourced GTM engineering playbook. Production-grade GTM engineering systems built for Linear, Descript, and Canva, open-sourced as a public GitHub repo. This is the first time production GTM engineering has been cloneable. Before this, the playbooks lived in internal Notion pages and in the head of the operator who built them. Now they live in a repo anyone can fork.

The high-density AI-native stack. $30M revenue target with under eighty people. Seventy-eight tools used weekly, tested down from three hundred and fifty. Signal-driven across every function, from prospect enrichment to delivery ops. The case is interesting not because of the tool count but because the tool count works. Density is a feature when the context layer holds. On a fragmented stack, seventy-eight tools is chaos. On a unified context layer, it is specialization.

The motion-first rebuild. Sixty meetings per month booked on autopilot without adding headcount. Eight structural shifts, starting with hybrid AI SDRs that augment humans rather than replace them. The architectural claim inside the case is the one to notice. The rebuild was of the GTM system, not the tool stack. The tools changed because the system changed first.

Five stacks. Five different company shapes. One shared property: context moves across every surface. More stacks in this pattern are documented weekly in Show Me Your Stack.

What Compression Looks Like in Practice

Three public cases of signal-to-action compression, each one measurable and each one dependent on the context layer working. Without it, none of the three produces these outcomes.

$1M to $10M ARR in nine months. Interviews with more than a dozen AI-native founders across late 2025 and early 2026 show a consistent pattern. The compression from $1M to $10M ARR now runs under nine months, against a historical baseline of twenty-four-plus months for IPO-caliber SaaS. The compression is not explained by better product or more capital. It is explained by the motion, the content layer, and the outbound engine running on one context substrate instead of three.

ABM meetings with AWS, a16z, Harvey, and Anthropic in a single quarter. A seven-play Clay-based ABM motion produced Q1 meetings with AWS, a16z, HG Insights, Harvey AI, and Anthropic. The filter did the work. Target by challenge type, not firmographics. Same company size does not mean same problems. The play is possible because Clay, enrichment, and messaging share context through the stack. Without that, the filter is just another manual step.

A complete marketing rewrite in four hours. Four hours inside one agent surface rewrote a growth-stage company’s entire marketing function. Step one: find the golden segment using Stripe retention data. Step two: learn what made that segment different. Step three: rewrite every piece of acquisition to attract only that segment. The four hours did not compress because the operator was typing fast. They compressed because the context needed (payments, retention, product usage, segment signal) was all reachable from one agent surface.

The through-line across these results is not speed. It is that signal-to-action compression stops being heroic. The same rewrite, the same ABM play, and the same outbound rebuild used to take a team a quarter. The stack with the context layer makes it a week.

The Operational Shift

Most operators skip the basics and jump to tactics. Tools. Channels. Hacks. Messaging clarity and ICP clarity come later, if at all. The result is a team running fast on a foundation that was never set.

The AI layer makes that worse in the old architecture and better in the new one. On a fragmented stack, AI ships fast output with no foundation. On a stack with MCPs, the foundation is encoded once and every agent inherits it. The ICP definition lives in one place. The retention signal lives in one place. The product usage lives in one place. Every agent that touches the stack starts from that foundation instead of rebuilding it.

Anthropic’s Claude Managed Agents is the inflection that makes this mechanical. Persistent context across sessions. Sub-agents running in parallel under a single orchestrator. Built-in error recovery. The infrastructure is catching up to the architectural premise that an agent without context is a very expensive prompt loop.

The shift for the operator is straightforward. Stop thinking about the stack as a collection of tools. Start thinking about it as an operating system for the motion. The tools are interchangeable. The context layer is not.

What Is Load-Bearing

Three structural claims carry the weight of this post. If any of them is wrong, the rest of the argument collapses.

Fragmentation is a context problem, not a tooling problem. Every stack consolidation effort of the last decade (CDPs, data warehouses, reverse ETL, headless CRMs) attempted to solve it at the data layer and underperformed. The reason is that the unit of fragmentation is not data. It is context. Data can be centralized. Context has to be composable across agents. MCPs are the first protocol that treats it that way.

The agent is a surface. The context layer is the infrastructure. Teams that buy agents without building the context layer end up with a more expensive version of the problem they had. The agent amplifies whatever is underneath it. On a fragmented stack, that is noise. On a context layer, that is leverage.

The operator’s unit of work is shifting from prompts to motions. The team that still runs on prompts is running manual labor dressed up as AI. The team that runs on composed motions is running infrastructure. The first one hits a ceiling at scale. The second one compounds.

What to Install This Quarter

Map your stack by role, not by category. Which agent owns the outbound motion? Which agent owns renewals? Which agent owns the content layer? If nobody owns the motion, no tool in the stack will fix it. The first deliverable is an org chart of the agent layer, drafted before any MCP is written.

Identify the three places your team starts every session at zero. Those are your MCP targets. The ICP that gets repasted into every chat. The account context that never crosses the seam between CRM and warehouse. The product usage signal that lives in Amplitude and never reaches the sales team. Those are the three surfaces that need a context layer first. Start there.

Pick one motion (outbound, onboarding, or renewals) and rebuild it with a single persistent context layer. Measure conversion before and after. Teams that have done this have compressed multi-week motions into multi-day motions. The conversion lift is a side effect. The real prize is that the motion now runs without the operator who built it.
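The before-and-after measurement is simple arithmetic, but it only means something if both periods use the same definition of a conversion and a comparable window. A minimal sketch, with placeholder counts rather than results from any team:

```python
def conversion_rate(conversions: int, attempts: int) -> float:
    """Share of attempts (e.g. accounts touched) that converted."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    return conversions / attempts


def relative_lift(before: float, after: float) -> float:
    """Change in conversion rate relative to the baseline rate."""
    if before <= 0:
        raise ValueError("baseline rate must be positive")
    return (after - before) / before


# Placeholder numbers for illustration only.
rate_before = conversion_rate(conversions=12, attempts=400)
rate_after = conversion_rate(conversions=18, attempts=400)
lift = relative_lift(rate_before, rate_after)
print(f"before={rate_before:.1%} after={rate_after:.1%} lift={lift:.0%}")
```

Record cycle time alongside the rate, since the claim being tested is compression of the motion and not only lift.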

Kill the tools that exist to compensate for bad context. Most of them go away once the context layer works. The reconciliation scripts. The manual enrichment layers. The dashboards built to patch over the gap between two tools that do not talk. That is not a feature of your stack. It is evidence of what the stack is missing.

Run a context audit every quarter. The stack will drift. Agents will accumulate. New tools will ship. Without a deliberate review, the context layer will fragment faster than it can consolidate. Treat context drift the way a platform team treats tech debt. Name it. Track it. Retire the pieces that no longer carry weight.
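One way to make the audit mechanical is to keep an inventory of context sources and flag the ones that are stale or that no agent reads. The sketch below assumes a hypothetical `ContextSource` record and a 90-day review window. The inventory entries are invented examples.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple


@dataclass
class ContextSource:
    name: str
    last_reviewed: date
    consuming_agents: List[str]


def audit(
    sources: List[ContextSource], today: date, max_age_days: int = 90
) -> Tuple[List[str], List[str]]:
    """Return (stale, orphaned) source names.

    stale: not reviewed within max_age_days.
    orphaned: no agent consumes the source, so it is a retirement candidate.
    """
    cutoff = today - timedelta(days=max_age_days)
    stale = [s.name for s in sources if s.last_reviewed < cutoff]
    orphaned = [s.name for s in sources if not s.consuming_agents]
    return stale, orphaned


# Invented inventory for illustration only.
inventory = [
    ContextSource("icp_definition", date(2030, 1, 10), ["outbound", "content"]),
    ContextSource("retention_signal", date(2029, 9, 1), ["renewals"]),
    ContextSource("legacy_enrichment", date(2029, 12, 20), []),
]
stale, orphaned = audit(inventory, today=date(2030, 2, 1))
print("stale:", stale)
print("orphaned:", orphaned)
```

Stale sources get a named owner and a review date. Orphaned ones are the first candidates to retire.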

Why This Matters

Software is no longer defensible at the code layer. Features ship in a weekend. A competitor clones the product in two. What compounds is how the system connects, how fast the team rewires it when the market moves, and how much of the company’s operating knowledge is encoded in a place an agent can reach.

The Figma week was the clearest preview of what that means in practice. On a Monday, Anthropic’s CPO resigned from Figma’s board. The same day, news broke that Claude’s next model would ship with design tools. By Thursday, Anthropic launched Claude Design. Figma closed down 7 percent. Adobe, Wix, and GoDaddy took hits in the same window. The product reads the codebase, applies the brand system automatically, and hands finished work to Claude Code for production. The application layer that took Figma a decade to build was reabsorbed into the model layer in four days. That is the dynamic every B2B company built on a foundation model is now operating inside.

MCPs are the first piece of infrastructure that makes the response to that dynamic mechanical rather than heroic. The defensibility moves from the product to the system around it. Teams that install the context layer this year are the teams running the same motion at five times the velocity twelve months from now. Teams that stay on the fragmented stack will be the ones still pasting the ICP into every new chat, wondering why AI is not producing the leverage the slide deck promised.

This is not a tooling cycle. It is an architectural cycle. The separation over the next two years is between teams that treat AI as a tool and teams that treat it as infrastructure. The difference is the context layer. It is already in production at the companies that will compound hardest.

Sources and Further Reading

The architectures, cases, and findings above are grounded in the work of operators who are publishing their systems in public. The list below is the subset whose work directly shaped this post.

Sangram Vajre, GTM Partners. The “little bit of everything” observation and the six-motions data point.

Ben Carden, RevenueFlow. Side-by-side of the legacy outbound stack and the AI-native rebuild.

John Hurley. The AI Transformation Model and the architectural framing that connection speed is the bottleneck.

Maja Voje, GTM Strategist. The “agents got the hype, MCPs got the adoption” framing, the sixteen-MCP reference stack, the seven-play Clay ABM motion, and the share of the Revenue Architects playbooks.

Garry Tan, Y Combinator. The gstack GitHub repo, twenty-three opinionated tools structured as role-based agents.

Nico Druelle and Karl Rafidimanana, Revenue Architects. Open-sourced GTM engineering systems for Linear, Descript, and Canva.

Dan Rosenthal. The $30M AI-native services stack running seventy-eight tools weekly under eighty headcount.

Tim Carden. The motion-first rebuild producing sixty meetings per month without adding headcount.

Kyle Poyar, Growth Unhinged. Interviews with AI-native founders documenting the $1M to $10M ARR compression.

Zayd Syed Ali, Valley. The four-hour marketing rewrite using payments, retention, and segment signal through a single agent surface.

Brigitta Ruha. The observation that most operators skip the basics and jump to tactics.

Show Me Your Stack. Weekly documentation of production AI-native GTM stacks across B2B companies.

Anthropic. Claude Managed Agents announcement and the Figma week coverage referenced in the closing section.